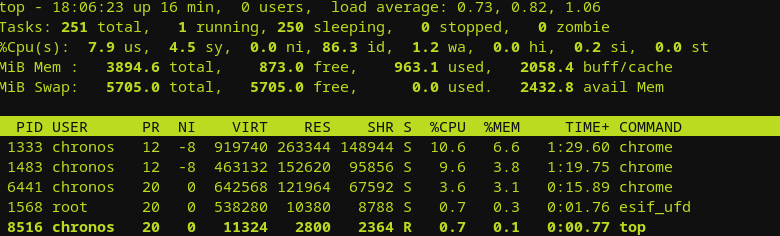

What laptop and OS would I rely on if working remotely was a necessary evil should a global pandemic impact…

Author: EPH

Computer enthusiast

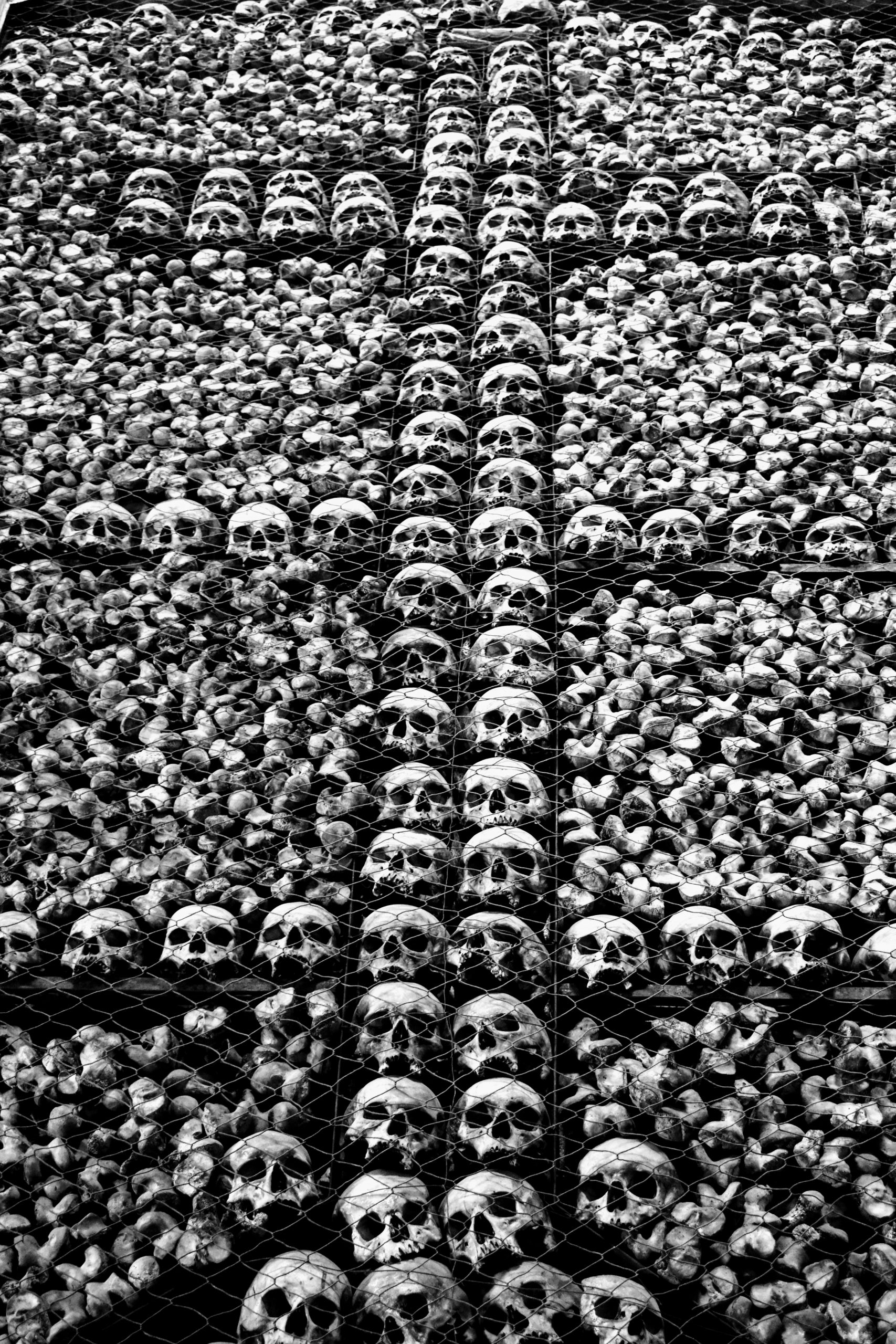

Behold An Ashen Horse

Is this the preamble to some very dark days? The news is somewhat muted in its coverage of the Novel…

Stay Awake All Night

Not far into 2020 and things are already getting weird. Politics have turned into a sideshow, the major news media…

Prepare for Rumors of War

The news is ramping up on the potential for bad things happening. This is not great timing for many computer users…

Another Decade Ends.

2020 is here and with it comes a lot of possibilities. Instead of looking back at the last year’s notable…